SALLO is an open-source suite of tools designed to simplify the development of psychophysical experiments on spatial orientation in Unity projects. It is built on the understanding that a psychophysical experiment can be divided into four essential sub-elements:

Stimulus: Define and manipulate the visual or sensory stimuli presented to participants.

Task: Implement experimental tasks that participants need to perform in response to the stimuli.

Psychophysical Method: Apply various psychophysical methods to quantify perceptual experiences.

Spatial Positioning: Control the spatial arrangement and positioning of elements within the virtual environment.

Accordingly, SALLO includes template game objects and base components that cover each sub-element of a psychophysical experiment. These tools provide a reference base that developers can easily customize to their needs, speeding up and simplifying experiment development. SALLO is maintained on GitHub at https://github.com/DavideSpot/SALLO and is openly available under the GPL-3.0 license.

ARENA2D is a novel device that provides auditory feedback through the serial emission of spatialized sounds. The hardware was developed by the Electronic Design Laboratory (EDL) Unit of the Italian Institute of Technology (IIT) in Genoa. ARENA2D is a vertical array of 25 haptic blocks arranged in the form of a matrix (50 x 50 x 10 cm). Each block (5 x 5 cm) is a 4 x 4 matrix of tactile sensors or pads (2 x 2 cm each) with a speaker at its center. One of the most important hallmarks of this device is its modularity: the blocks are connected in cascade through USB cables and can be separated from one another by up to 20 cm. Furthermore, every block can be programmed independently (for instance, the user can set a different sound for each block). ARENA2D weighs 12 kg.

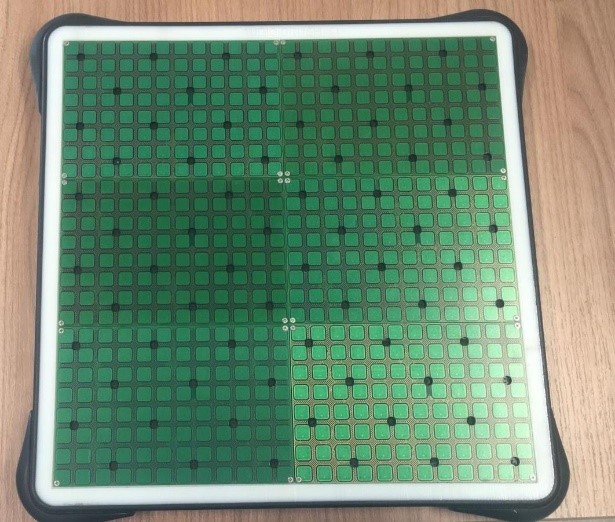

Audiobrush is a tablet (35 x 35 cm, see Figure 2.11) that allows serial and parallel emission of spatialized sounds. The tablet is composed of 576 capacitive sensors. Audio files must have a fixed format: 8 bit/sample, 22050 Hz, mono. The system is composed of six TAT1 and TAT2 boards, managed locally by a Raspberry board running Linux and over WiFi by a PC or a smartphone. The Raspberry looks for a WiFi network named "AudioBrush" to connect to, and data are exchanged with the Raspberry at a fixed address. Each TAT1 board comprises 96 tactile sensors (pads) and 12 speakers. It is connected to a TAT2 board, which provides the power supply, the connection to the RS485 port, and the audio signal to be emitted. The TAT2 boards control the TAT1 boards and also carry the voltage regulators for the whole device and the RS232/RS485 converters.
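As an illustration of the fixed audio format stated above (8 bit/sample, 22050 Hz, mono), the following minimal Python sketch generates a test tone and checks that a WAV file matches that format. It uses only the standard library. The function names (`make_tone`, `write_wav`, `matches_format`) are hypothetical and are not part of the Audiobrush software; how files are actually transferred to the device is not covered here.

```python
import io
import math
import wave

# Format stated in the text: 8 bit/sample, 22050 Hz, mono.
SAMPLE_RATE_HZ = 22050
SAMPLE_WIDTH_BYTES = 1  # 8-bit WAV samples are unsigned (0..255)
CHANNELS = 1


def make_tone(freq_hz=440.0, duration_s=0.5, amplitude=0.8):
    """Return unsigned 8-bit PCM samples of a sine tone (hypothetical helper)."""
    n_samples = int(SAMPLE_RATE_HZ * duration_s)
    samples = bytearray()
    for i in range(n_samples):
        value = math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE_HZ)
        level = int(round(127.5 + 127.5 * amplitude * value))
        samples.append(max(0, min(255, level)))  # clamp to the 8-bit range
    return bytes(samples)


def write_wav(target, samples):
    """Write samples to a path or file-like object in the fixed format."""
    with wave.open(target, "wb") as wav:
        wav.setnchannels(CHANNELS)
        wav.setsampwidth(SAMPLE_WIDTH_BYTES)
        wav.setframerate(SAMPLE_RATE_HZ)
        wav.writeframes(samples)


def matches_format(source):
    """Return True if the WAV at source is 8-bit, 22050 Hz, mono."""
    with wave.open(source, "rb") as wav:
        actual = (wav.getnchannels(), wav.getsampwidth(), wav.getframerate())
    return actual == (CHANNELS, SAMPLE_WIDTH_BYTES, SAMPLE_RATE_HZ)


if __name__ == "__main__":
    buffer = io.BytesIO()  # in-memory stand-in for a file on disk
    write_wav(buffer, make_tone())
    buffer.seek(0)
    print("format ok:", matches_format(buffer))
```

Passing a file path instead of the `BytesIO` buffer writes the tone to disk; `matches_format` can likewise be pointed at an existing recording to check it before use.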

The “ABBI” project (FP7-ICT-2013-10-611452) included the development of ABBI-K, an innovative portable system consisting of a briefcase with a set of customized loudspeakers, controlled via Wi-Fi™ by a dedicated Android application, and two ABBI devices. Its main aim is to provide a more accessible and intuitive tool that simplifies testing procedures.

ABBI-K is intended to overcome the limits of previous experimental systems for assessing spatial and motor skills in visually impaired children and adults undergoing rehabilitative training with ABBI. The system includes 10 independent Wi-Fi™ loudspeakers, a smartphone with the “ABBI-K” Android application, two 7-port USB charging hubs with their power supplies for recharging multiple devices simultaneously, and two ABBI devices. Calls, SMS, and mobile data are disabled on the smartphone so that it is dedicated solely to experimental purposes. In this way, the system replaces a PC with a smartphone running an easy-to-use application.

The Rotational-Translational Chair (RT-Chair) is a 2-degrees-of-freedom motion platform. This device allows the investigation of the vestibular system, which senses the angular and linear acceleration of the head in space and provides information about self-motion in the absence of other cues such as vision or audition. The RT-Chair can provide endless 360-degree rotations about the earth-vertical axis and linear translations of up to 200 cm, and it can combine both movement types simultaneously. This newly developed device not only supports neuroscientific investigation but also has multiple other applications. Combined with virtual reality (VR), it may enhance the VR experience by providing smooth, vibration-free movements and reducing the sensation of cybersickness. In addition, it may be installed in research facilities and hospitals to perform assessment and rehabilitation for patients with vestibular disorders.

The VRCR is a virtual reality-based serious games platform for the assessment of head-trunk motor coordination, sensitivity to altered head-trunk sensorimotor contingencies (perception-action associations), and spatial perception asymmetries. In practice, it is a set of custom archery-style piloting games in which the participant steers the arrow using the head, the trunk, or a combination of both body parts. We currently maintain two versions of the platform: one purely visual and one purely acoustic.

Caterpillars are multi-sensory systems providing visual, acoustic, and haptic stimulations. These devices give the opportunity to study multi-sensory integration taking into account spatial and temporal features of the stimulus by considering both the extra-personal and the body space.

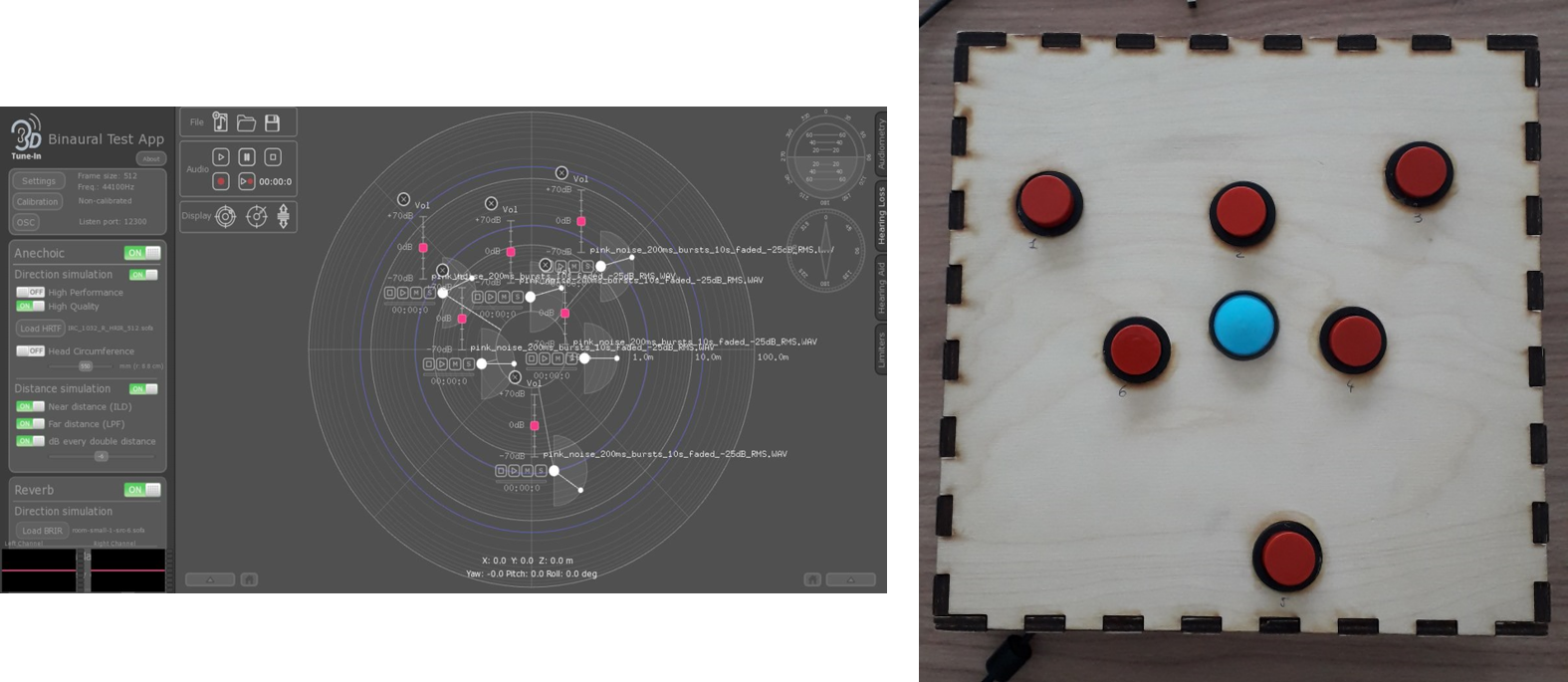

The system emits spatialized sounds through headphones and records the perceived locations with an Arduino Uno-based keyboard. The user listens to acoustic stimuli that are spatialized using an open-source library for audio spatialization and for the simulation of hearing loss and hearing aids. The keyboard, designed in AutoCAD, was built as a laser-cut wooden box with a set of buttons on its top surface.

RoMAT is a novel robotic platform suitable for investigating perception in multisensory motion tasks for individuals with and without sensory and motor disabilities. The system allows the study of how multisensory signals are integrated, taking into account the speed and direction of the stimuli. It is composed of a visual wheel and a tactile wheel mounted on two rotatable plates, so that the wheels move under the participant's finger and in the participant's view. This device could serve as a basis for designing screening and rehabilitation protocols, grounded in neuroscientific findings, for individuals with visual and motor impairments.