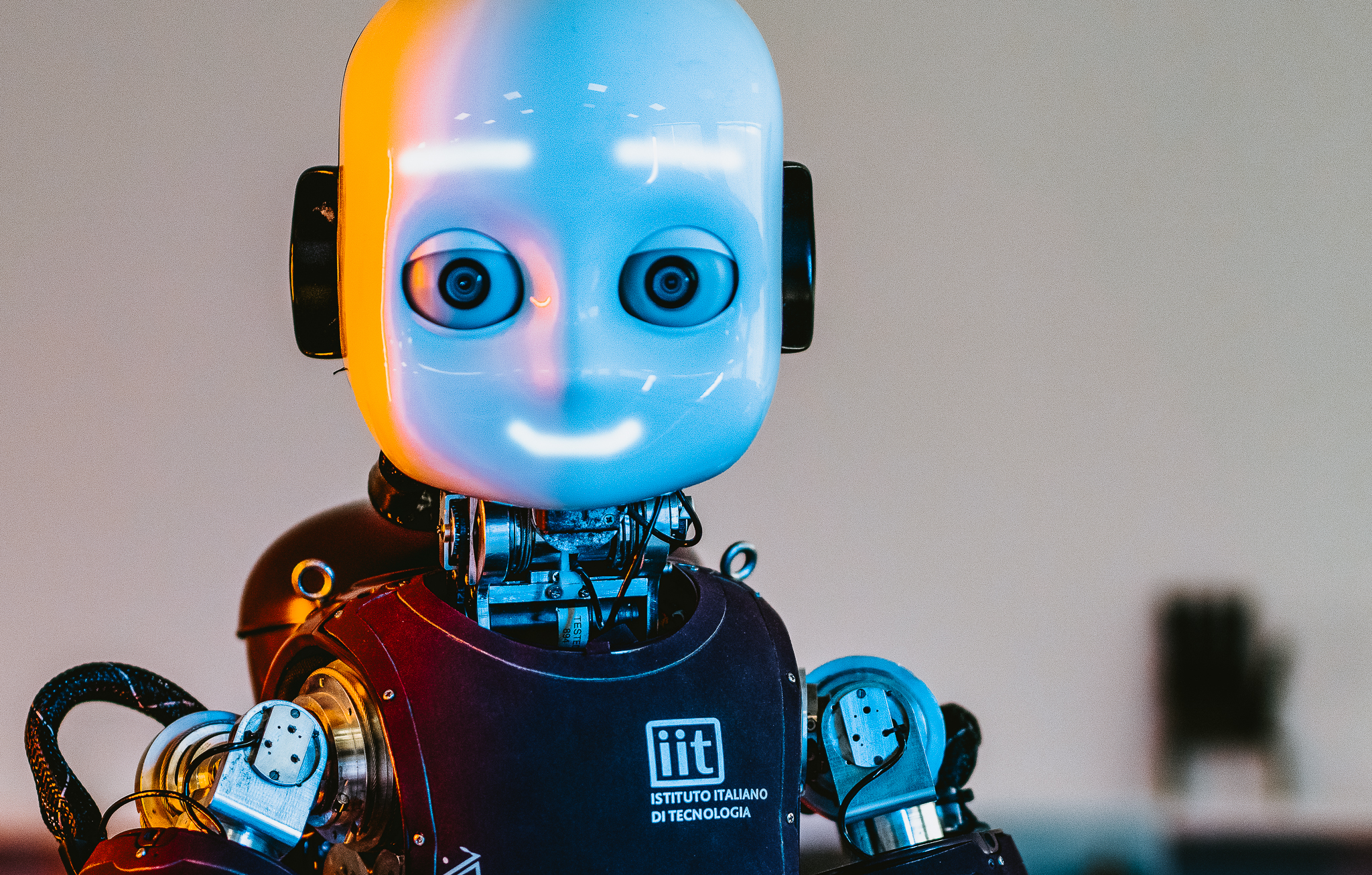

Event Driven Perception for Robotics

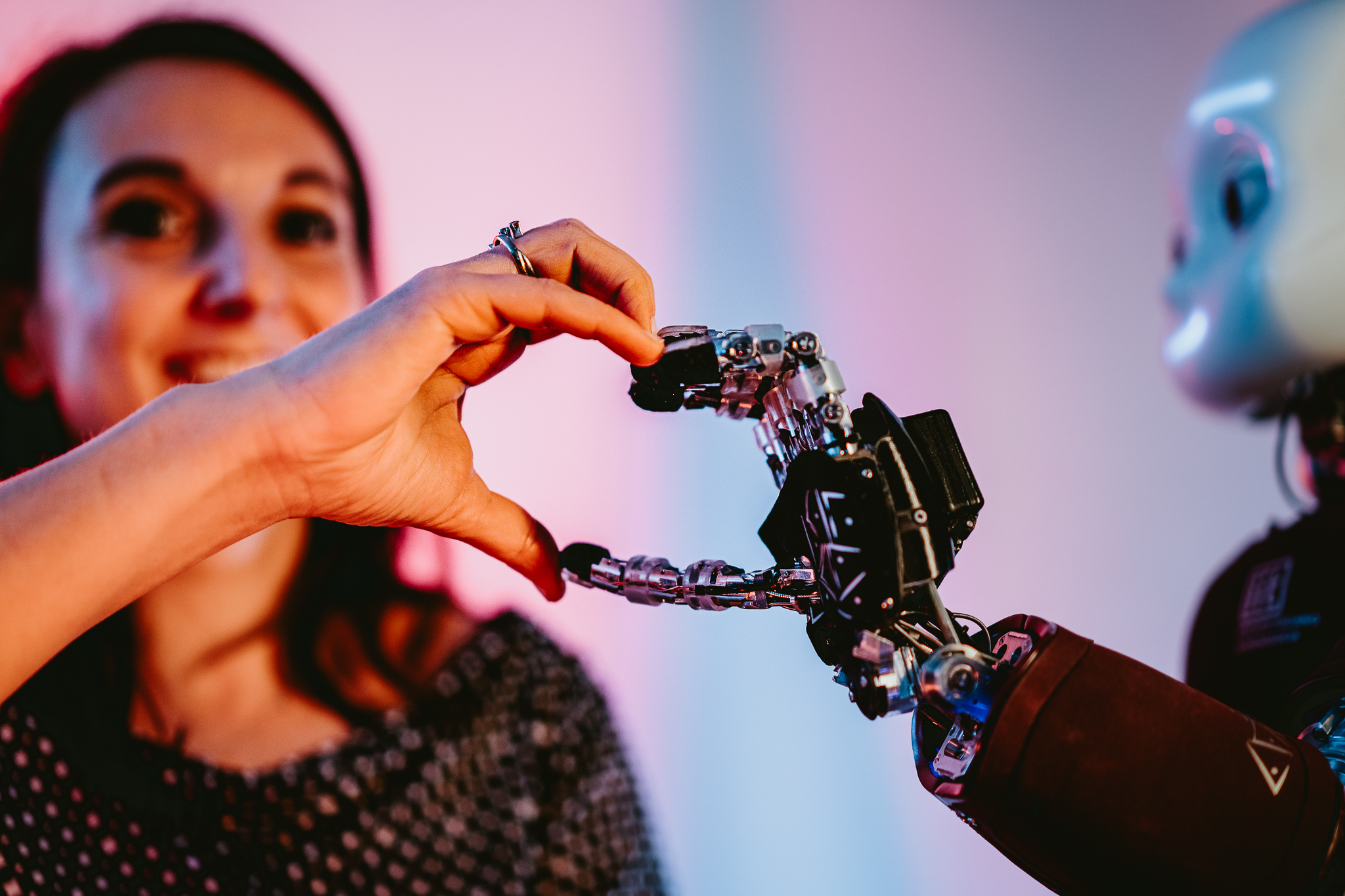

We exploit event-driven sensing and neuromorphic processing to endow robots with improved energetic and behavioural autonomy.

Our focal point is bio-inspiration: exploiting neural computational primitives implemented on neuromorphic hardware. We also explore engineered methodologies for event-driven processing under hard real-time constraints.