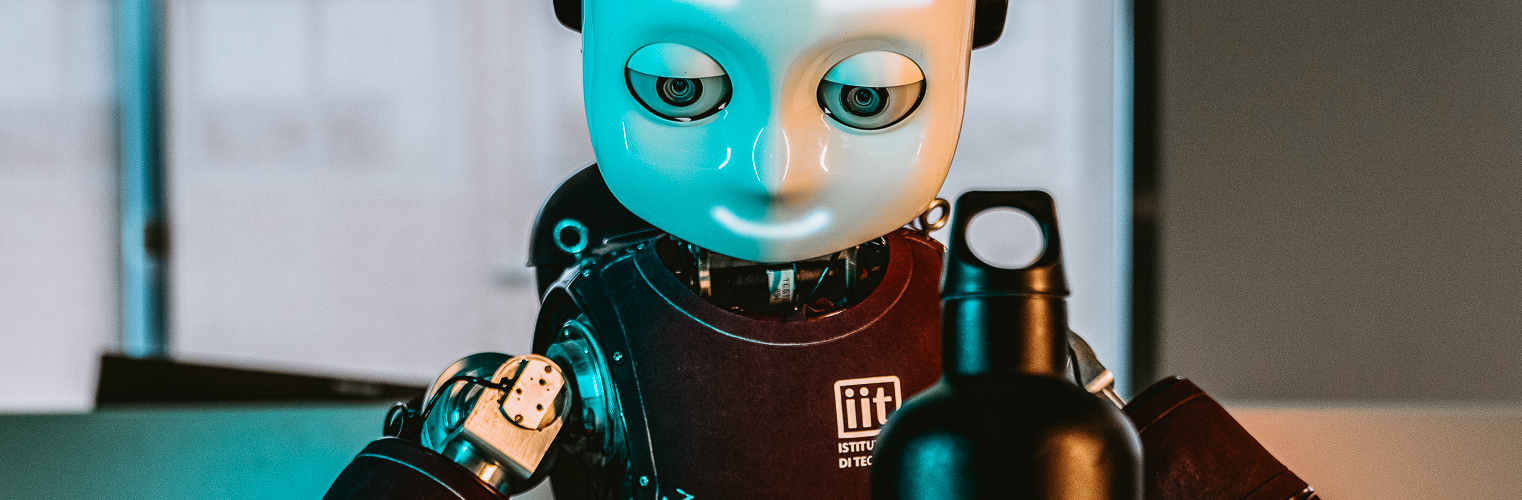

To interact effectively with its environment, an intelligent system must be able to identify the items around it. For a humanoid platform, detecting and recognizing objects is essential for smooth integration into real-life scenarios. The goal of this project is therefore to provide iCub with this capability, relying only on the information coming from the event-driven cameras mounted in its eyes. To do so, we use Spiking Neural Networks (SNNs), in an attempt to build a purely event-driven framework.

Our experiments show promising recognition capabilities on a dataset collected by showing a set of objects to the robot's cameras. The network is trained with back-propagation, using surrogate gradients to approximate the derivative of the non-differentiable spike function. This technique lets us rely on GPU-based training libraries such as PyTorch. The figures show a data sample and the test accuracy during training. At the same time, the learning rules used in this project map onto neuromorphic hardware (such as Loihi), a step towards a fully spiking implementation.
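As an illustration of surrogate-gradient training, the sketch below defines a spike function in PyTorch whose forward pass is a hard threshold and whose backward pass uses a smooth stand-in derivative. It is a minimal sketch and not the project's actual code: the leaky integrate-and-fire neuron, the fast-sigmoid surrogate, the `scale`, `beta` and `threshold` values, the soft reset, the spike-count loss, and the names `SurrogateSpike` and `lif_forward` are all assumptions made for the example.

```python
import torch


class SurrogateSpike(torch.autograd.Function):
    """Hard threshold in the forward pass, smooth surrogate in the backward pass."""

    scale = 10.0  # assumed surrogate steepness, not a value from the project

    @staticmethod
    def forward(ctx, v):
        ctx.save_for_backward(v)
        # Emit a spike (1.0) wherever the input is above zero
        return (v > 0).to(v.dtype)

    @staticmethod
    def backward(ctx, grad_output):
        (v,) = ctx.saved_tensors
        # Derivative of a fast sigmoid replaces the ill-defined spike derivative
        return grad_output / (SurrogateSpike.scale * v.abs() + 1.0) ** 2


def lif_forward(inputs, weight, beta=0.9, threshold=1.0):
    """Simulate one layer of leaky integrate-and-fire neurons (assumed model).

    inputs: (time, batch, n_in) tensor of binary events
    weight: (n_in, n_out) synaptic weights
    returns: (time, batch, n_out) tensor of output spikes
    """
    mem = torch.zeros(inputs.shape[1], weight.shape[1],
                      dtype=inputs.dtype, device=inputs.device)
    out = []
    for x_t in inputs:
        mem = beta * mem + x_t @ weight           # leaky integration
        spk = SurrogateSpike.apply(mem - threshold)
        mem = mem - spk.detach() * threshold      # soft reset after a spike
        out.append(spk)
    return torch.stack(out)


torch.manual_seed(0)
events = (torch.rand(20, 4, 8) < 0.3).float()     # synthetic event stream
weight = torch.nn.Parameter(0.5 * torch.randn(8, 3))
spikes = lif_forward(events, weight)
loss = spikes.sum(0).mean()                       # toy spike-count readout
loss.backward()                                   # gradients reach the weights
```

Because the backward pass is an ordinary differentiable function, standard PyTorch optimizers and GPU execution apply unchanged; only the spike nonlinearity needs the custom rule.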

Spiking Neural Networks, PyTorch, Deep Learning, Surrogate gradient, GPU

Emre Neftci - Forschungszentrum Jülich

Iacono M., Weber S., Glover A., Bartolozzi C. Towards Event-Driven Object Detection with Off-the-Shelf Deep Learning. IEEE International Conference on Intelligent Robots and Systems.
